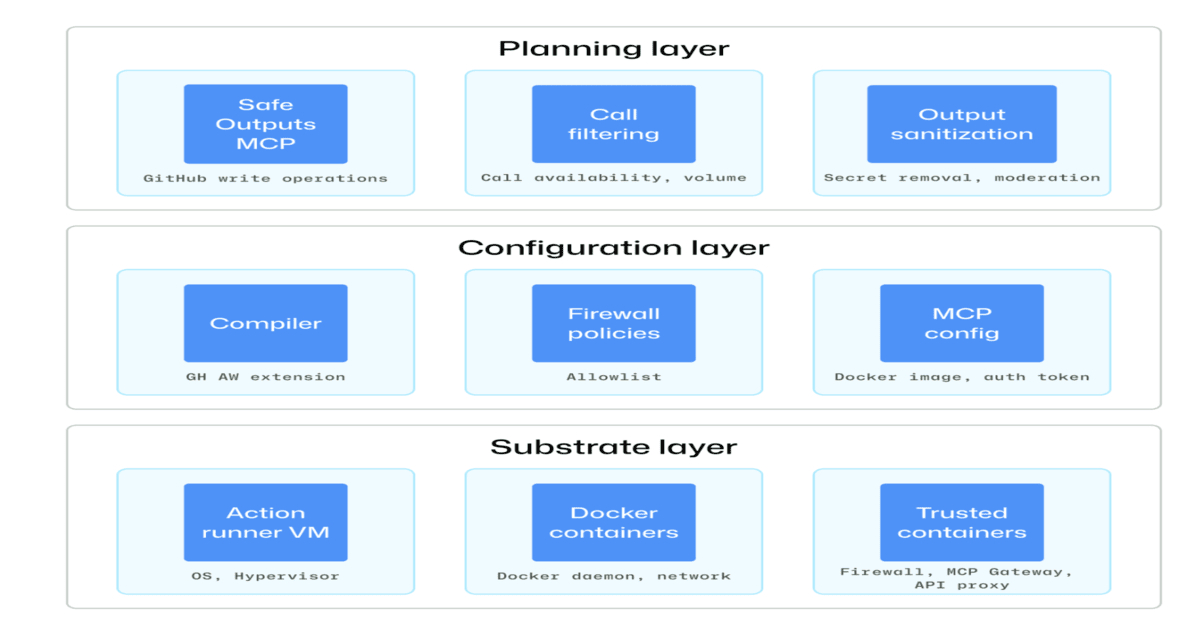

"GitHub has detailed the security architecture behind its agentic workflows, outlining a defense-in-depth approach to safely integrate autonomous AI agents into CI/CD pipelines. The design emphasizes isolation, constrained execution, and auditability to mitigate risks introduced by AI-driven automation."

"At the core of GitHub's design is a layered model built on isolation. Agents run in sandboxed, ephemeral environments with tightly restricted permissions, preventing persistence and limiting potential blast radius. Workflows operate in read-only mode by default, and any write operation must pass through controlled safe outputs, such as pull requests or issue comments, ensuring that all changes remain transparent, reviewable, and subject to approval before being applied."

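The read-only default and safe-output gating described above can be sketched in a few lines. This is a minimal illustration, not GitHub's implementation: `SafeOutputGate`, `ProposedAction`, and the `SAFE_OUTPUT_KINDS` allow-list are hypothetical names, and the only details taken from the text are that writes are limited to reviewable channels such as pull requests or issue comments and that activity is logged.

```python
from dataclasses import dataclass, field

# Hypothetical allow-list of "safe output" kinds. The two entries mirror the
# examples named in the text; the identifiers themselves are assumptions.
SAFE_OUTPUT_KINDS = {"pull_request", "issue_comment"}


@dataclass(frozen=True)
class ProposedAction:
    """A write the agent would like to perform (illustrative type)."""
    kind: str
    payload: str


@dataclass
class SafeOutputGate:
    """Queues allowed writes for human review and rejects everything else."""
    pending_review: list = field(default_factory=list)
    audit_log: list = field(default_factory=list)

    def submit(self, action: ProposedAction) -> bool:
        allowed = action.kind in SAFE_OUTPUT_KINDS
        # Every request is logged, whether or not it is accepted.
        self.audit_log.append((action.kind, "queued" if allowed else "rejected"))
        if allowed:
            # Nothing is applied here; the action only waits for approval.
            self.pending_review.append(action)
        return allowed


demo_gate = SafeOutputGate()
demo_gate.submit(ProposedAction("pull_request", "Fix flaky test"))
demo_gate.submit(ProposedAction("push_to_main", "Direct commit"))
print(demo_gate.audit_log)
```

The point of the design is that the agent never holds write credentials itself: it can only propose, and a separate, more trusted step decides what is applied.
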
"A key principle is preventing secret exposure to agents. In shared runner environments, agents can access environment variables, configuration files, and runtime state, making prompt injection a"

Agentic workflows extend CI/CD by letting AI agents interpret intent, make decisions, and execute tasks in GitHub Actions. This increases productivity but also expands the attack surface to include prompt injection, privilege escalation, and unintended actions. GitHub’s security architecture responds with defense-in-depth built on layered isolation. Agents run in sandboxed, ephemeral environments with tightly restricted permissions, which prevents persistence and limits blast radius. Workflows are read-only by default, and any write must go through controlled safe outputs such as pull requests or issue comments, so changes remain transparent, reviewable, and approval-gated. Comprehensive logging supports auditability. Preventing secret exposure gets particular emphasis because agents in shared runner environments can access environment variables, configuration files, and runtime state.
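One generic way to keep secrets out of an agent's reach is to launch the agent process with an explicitly allow-listed environment instead of the runner's full one. The sketch below shows that idea only; it is not described in the source as GitHub's mechanism. The names `ALLOWED_ENV`, `scrubbed_env`, and `run_agent_step` are hypothetical, as is the `DEPLOY_TOKEN` variable.

```python
import os
import subprocess

# Hypothetical allow-list: only variables named here reach the agent process.
ALLOWED_ENV = ("PATH", "HOME", "LANG")


def scrubbed_env(source, allowed=ALLOWED_ENV):
    """Return a copy of `source` containing only allow-listed variables."""
    return {key: value for key, value in source.items() if key in allowed}


def run_agent_step(command, source_env=None):
    """Run `command` with a scrubbed environment (illustrative helper).

    Passing `env=` to subprocess.run replaces the inherited environment
    entirely, so variables outside the allow-list are never visible to
    the child process.
    """
    env = scrubbed_env(os.environ if source_env is None else source_env)
    return subprocess.run(
        command, env=env, capture_output=True, text=True, check=False
    )


# Example with a made-up runner environment containing one secret.
runner_env = {"PATH": "/usr/bin", "DEPLOY_TOKEN": "s3cr3t", "LANG": "C.UTF-8"}
print(scrubbed_env(runner_env))
```

An allow-list is used rather than a deny-list because new secrets added to the runner later stay hidden by default. Some platforms need extra variables to start a process at all (for example `SYSTEMROOT` on Windows), so the list would have to be adjusted per environment.
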

Read at InfoQ

