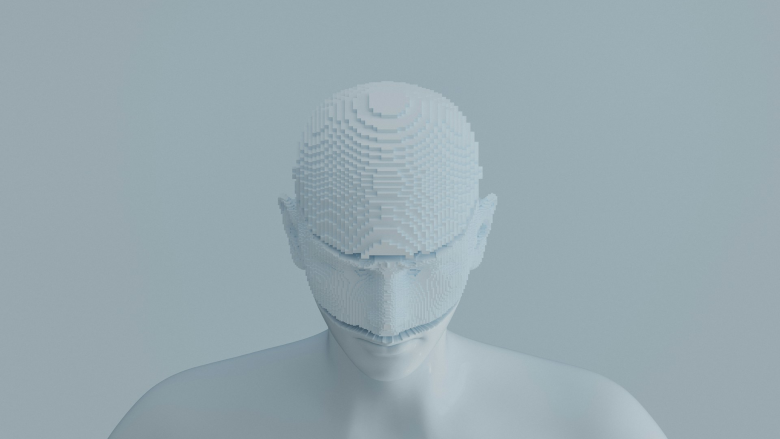

"A recent report published in the National Library of Medicine's PubMed Central database found human accuracy in identifying deepfakes can fall below 25 percent in certain conditions. As a result, the need for verification is shifting away from the individual and toward the systems designed to validate identity."

"These systems have long relied on the process of capturing a biometric input, comparing it to a stored record and confirming a match. While that model offers a broad range of use cases across government and enterprise environments from border control to digital service access, it's built on the assumption that the input being evaluated originates from a legitimate, physical source."

"One notable update is the formal classification of synthetic and morphed facial images as non-biometric content. This distinction signals that AI-generated images must be handled differently from traditional biometric data within verification workflows."

Human ability to identify deepfakes is unreliable: accuracy can fall below 25 percent in certain conditions, according to a report in the PubMed Central database. Traditional identity verification systems capture a biometric input, compare it to a stored record, and confirm a match, a model used across government and enterprise applications from border control to digital service access. That model assumes the input originates from a legitimate, physical source, an assumption that generative AI undermines by producing realistic synthetic media. Identity standards are evolving to address these risks. NIST updated its biometric data exchange standard, SP 500-290e4, for the first time since 2016, formally classifying synthetic and morphed facial images as non-biometric content. This distinction signals that AI-generated images must be handled differently from traditional biometric data within verification workflows.

#deepfake-detection #biometric-verification #synthetic-media #identity-standards #generative-ai-security

Read at Securitymagazine
