"Google's TPU 8t is designed for training at a massive scale, achieving up to 2.8 times faster performance compared to last year's Ironwood TPUs, while TPU 8i focuses on reducing costs for model serving."

"The introduction of distinct network topologies in new clusters minimizes scaling losses across both inference and training, addressing the modern AI workloads that rarely run on a single accelerator."

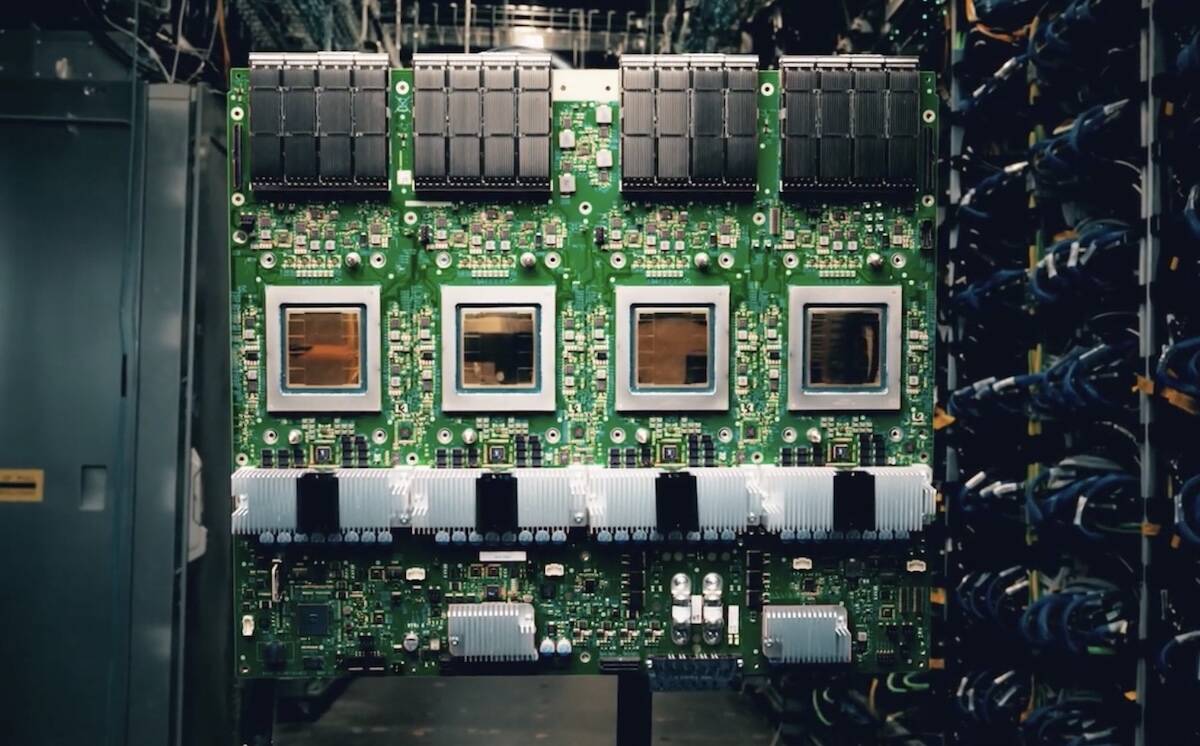

Google launched two new AI accelerators, TPU 8t for training and TPU 8i for inference, at its Cloud Next conference. The TPU 8t is up to 2.8 times faster in training and offers 80% higher performance per dollar for LLM inference compared to last year's models. This dual-tracked approach aims to eliminate bottlenecks in both workloads. Google is also transitioning to Arm-based Axion CPUs for its TPU host, enhancing efficiency in scaling AI workloads across multiple chips.

Read at Theregister

Unable to calculate read time

Collection

[

|

...

]