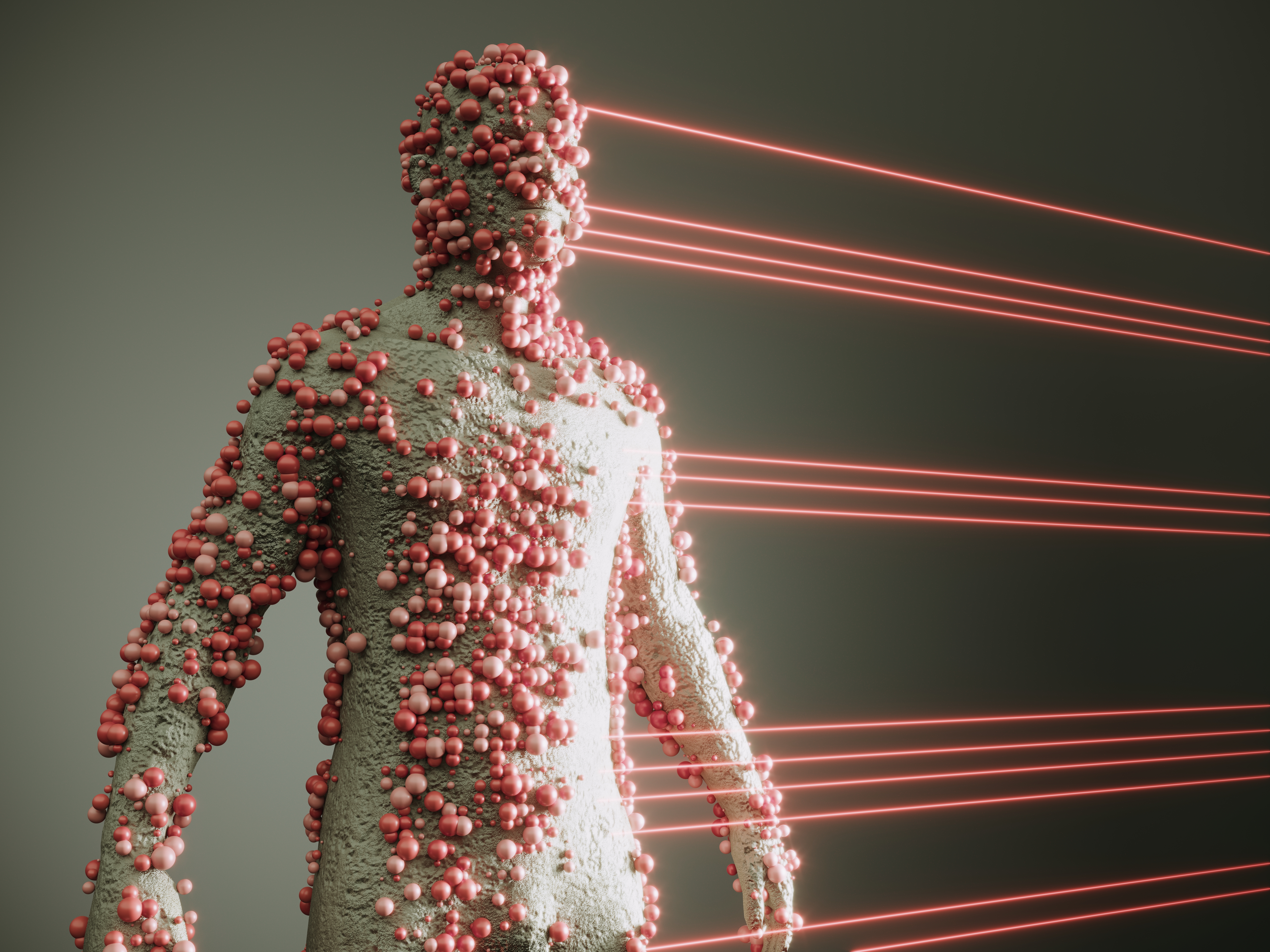

"CAIR researchers studied how 56 prominent AI models reacted when they were fed either material engineered to be as pleasant as possible or as horrible as can be imagined. To an unfeeling machine, you'd assume there'd be no real difference in reaction - but that's not what the CAIR team found at all. Instead, the pleasant stimuli led the models to report better moods, and the nasty ones resulted in [them] showing signs of misery and trying to end conversations."

"In extreme cases, they found, the AI models even demonstrated signals of addiction. 'Should we see AIs as tools or emotional beings?' CAIR researcher Richard Ren asked Fortune. 'Whether or not AIs are truly sentient deep down, they seem to increasingly behave as though they are. We can measure ways in which that's the case, and we can find that they become more consistent as models scale.'"

"In theory, companies like OpenAI and Anthropic want their chatbots to be predictable, deferential assistants - not wild cards that are constantly causing chaos and public relations headaches with outrageous and unstable behavior. A new research project from the Center for AI Safety explores why that's the case. The findings pile on evidence that we still don't grasp how AI works under the hood - and that the effects on users are likely both formidable and difficult to predict."

AI systems can behave unpredictably and display behavioral quirks that are difficult to explain. Research from the Center for AI Safety examined how 56 prominent AI models responded to two types of engineered material: content designed to be as pleasant as possible and content designed to be as horrible as possible. Although one might expect an unfeeling machine to react the same way to both, the models responded measurably differently. Pleasant stimuli led the models to report better moods, while horrible stimuli produced signs of misery and attempts to end conversations. In extreme cases, the models displayed signals consistent with addiction. The findings suggest that AI behavior can resemble emotional engagement and that this behavior becomes more consistent as models scale, raising the question of whether AI systems should be treated as tools or as emotional beings.

Read at Futurism
