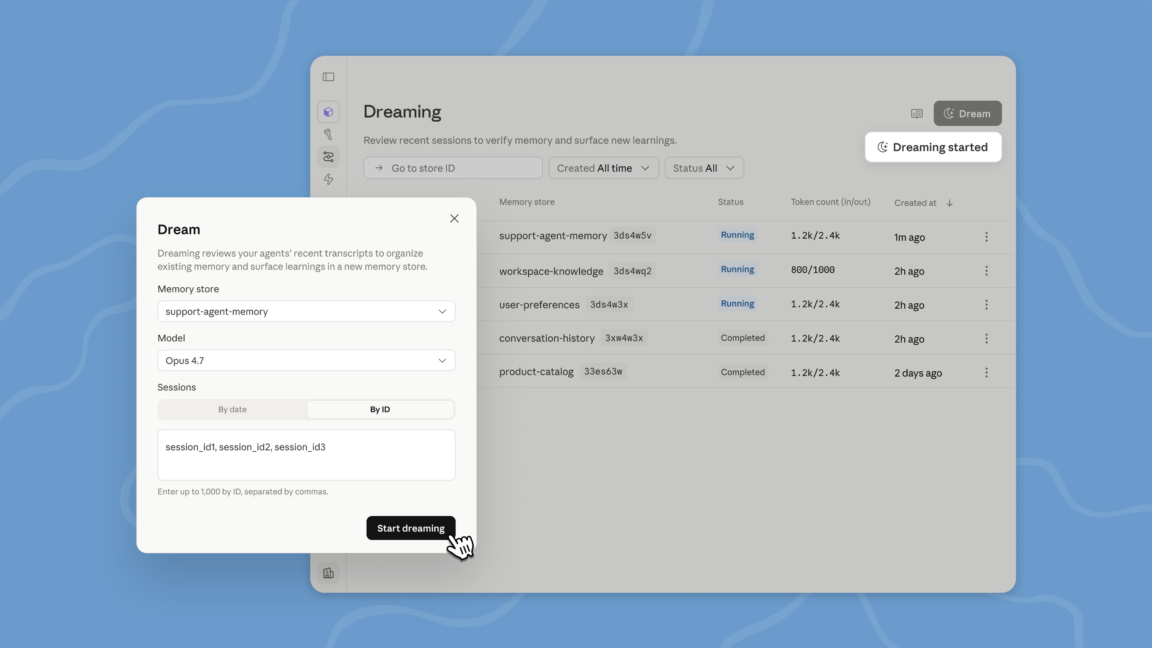

"Dreaming is a scheduled process where sessions and memory stores are reviewed, and specific memories are curated to inform future tasks and interactions."

"Context windows are limited for LLMs, and important information can be lost over lengthy projects, making the dreaming feature essential for memory retention."

"Managed Agents serve as a pre-built, configurable agent harness that operates in managed infrastructure, ideal for collaborative tasks over several minutes or hours."

"Many models use a process called compaction, analyzing lengthy conversations to remove irrelevant information while preserving what is important for ongoing tasks."

Anthropic has launched a feature called 'dreaming' for Claude Managed Agents. Dreaming reviews recent events to identify and store important memories for future tasks. The feature is currently in research preview and is designed for scenarios in which multiple agents collaborate over extended periods. Because context windows in large language models are limited, crucial information can be lost during lengthy projects, and curating memories helps retain it. Many models also use compaction, which analyzes lengthy conversations to keep relevant details and discard irrelevant ones.

Read at Ars Technica
