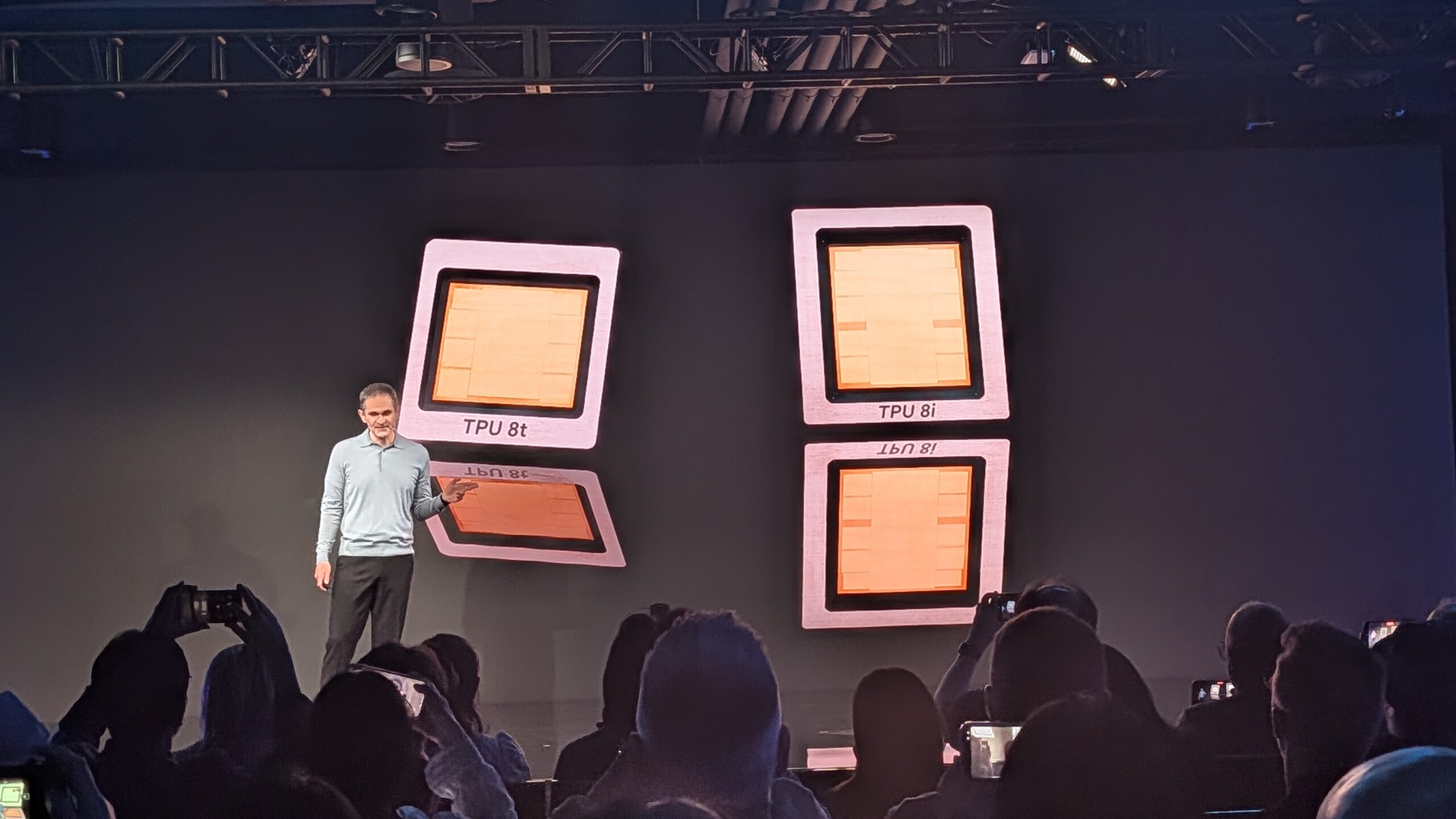

"The TPU 8t is built for one thing: training frontier models as quickly as possible. Google claims that this can reduce the development cycle for large models from months to weeks."

"A single TPU 8t superpod can now contain 9,600 chips, which is comparable to Ironwood; however, this new superpod delivers nearly three times as much computing power: 121 exaflops."

"The rationale behind this split is logical. Training and inference are fundamentally different workloads with different requirements. Training demands maximum computing power, massive scalability, and high bandwidth."

"Inference, on the other hand, requires low latency, more HBM capacity per pod, and efficient real-time communication between large numbers of chips."

At Google Cloud Next 2026, Google unveiled its eighth-generation TPUs: the TPU 8t for training and the TPU 8i for inference. The two chips give customers an alternative to Nvidia hardware for AI infrastructure. The TPU 8t is designed for maximum computing power, and Google claims it can cut model development cycles from months to weeks. The TPU 8i targets low latency and efficient real-time communication between chips. Each chip is tailored to the distinct demands of its workload, training or inference.

Read at Techzine Global
